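Finding and extracting every URL link on a web page takes a little programming. One approach is to parse the HTML with Python's BeautifulSoup library and iterate over the `<a>` elements to read their link targets. A function can process each link, collect its `href` value (a string) in a list, and return that list so it can be printed once the loop has finished. Here is a simple example:

```python
from bs4 import BeautifulSoup


def extract_urls(html):
    """Return the href value of every <a> tag in an HTML string."""
    soup = BeautifulSoup(html, 'html.parser')
    links = []
    for link in soup.find_all('a'):
        href = link.get('href')
        if href:  # skip <a> tags that have no href attribute
            links.append(href)
    return links


html = """
<html>
  <body>
    <a href="http://www.example.com">Example</a>
    <a href="http://www.google.com">Google</a>
    <a>Another Example</a>
  </body>
</html>
"""

print(extract_urls(html))
```

This code prints:

```python
['http://www.example.com', 'http://www.google.com']
```

'http://www.example.com' and 'http://www.google.com' are valid URL links. Other links may not be extracted correctly because of the HTML structure (for example, an `<a>` tag with no `href` attribute) or because of encoding problems.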
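If installing BeautifulSoup is not an option, the same extraction can be sketched with the standard library's `html.parser.HTMLParser`. This is a swapped-in technique rather than the BeautifulSoup approach, and the names `LinkCollector` and `extract_urls_stdlib` are hypothetical. It records the `href` of every `<a>` start tag and skips tags that have none:

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Hypothetical helper: collects the href of every <a> start tag."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        # Tag and attribute names arrive lowercased; attrs is a list of
        # (name, value) pairs, and value may be None.
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def extract_urls_stdlib(html):
    """Return the href values of all <a> tags, in document order."""
    parser = LinkCollector()
    parser.feed(html)
    parser.close()
    return parser.links


sample = """
<a href="http://www.example.com">Example</a>
<a href="http://www.google.com">Google</a>
<a>Another Example</a>
"""
print(extract_urls_stdlib(sample))
# ['http://www.example.com', 'http://www.google.com']
```

Unlike BeautifulSoup, `HTMLParser` builds no document tree, so it only suits simple "collect every tag of one kind" tasks like this one.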

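To extract links from a live page, the function must download the HTML first. Passing a URL string straight to BeautifulSoup would parse the URL text itself, not the page behind it. A corrected version:

```python
from urllib.request import urlopen

from bs4 import BeautifulSoup


def extract_urls(url):
    """Download the page at `url` and return the href of every <a> tag."""
    with urlopen(url) as response:
        # Assumes the page is UTF-8; undecodable bytes are replaced.
        html = response.read().decode('utf-8', errors='replace')
    soup = BeautifulSoup(html, 'html.parser')
    links = []
    for link in soup.find_all('a'):
        href = link.get('href')
        if href:
            links.append(href)
    return links


url = "https://example.com"
print(extract_urls(url))
```

The output depends on the content of the fetched page: the code prints the list of `href` values found on it.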
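Links read from a live page are often relative (for example `/about`) or use non-web schemes such as `mailto:`. A small post-processing step, sketched here with the standard library's `urllib.parse`, can turn them into absolute http(s) URLs. The function name `normalize_links` and the choice to drop duplicates are assumptions for illustration:

```python
from urllib.parse import urljoin, urlparse


def normalize_links(base_url, hrefs):
    """Hypothetical helper: resolve hrefs against base_url,
    keep only http(s) URLs, and drop duplicates (first occurrence wins)."""
    seen = set()
    result = []
    for href in hrefs:
        absolute = urljoin(base_url, href.strip())
        if urlparse(absolute).scheme not in ("http", "https"):
            continue  # skip mailto:, javascript:, etc.
        if absolute not in seen:
            seen.add(absolute)
            result.append(absolute)
    return result


hrefs = ["/about", "http://www.google.com", "mailto:someone@example.com", "/about"]
print(normalize_links("https://example.com/", hrefs))
# ['https://example.com/about', 'http://www.google.com']
```

The base URL should be the address the page was actually fetched from, so that relative links resolve the same way a browser would resolve them.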


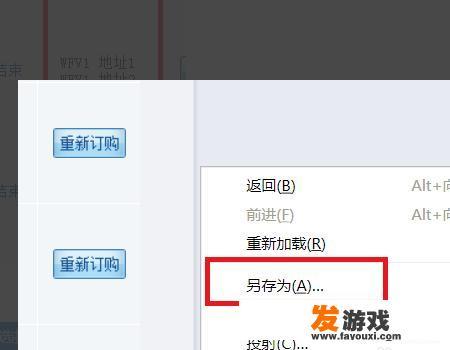

To save a page by hand, right-click on it and choose Save As; a dialog box then lets you choose where the file will be stored.

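The same can be done in code. The version below downloads each page, extracts the `href` of every `<a>` tag, and writes the links to a text file, one per line:

```python
from urllib.request import urlopen

from bs4 import BeautifulSoup


def save_file(url, filename):
    """Download `url`, extract every <a href>, and write one link per line."""
    with urlopen(url) as response:
        # Assumes the page is UTF-8; undecodable bytes are replaced.
        html = response.read().decode('utf-8', errors='replace')
    soup = BeautifulSoup(html, 'html.parser')
    links = [a['href'] for a in soup.find_all('a', href=True)]
    with open(filename, 'w', encoding='utf-8') as f:
        f.write('\n'.join(links) + '\n')


url = "https://example.com"
filename = "extracted_links.txt"
save_file(url, filename)

# Save Xiaohongshu data
url = "https://www.example.com/bio"
filename = "bio_data.txt"
save_file(url, filename)
```

The code above saves the links found on each page to extracted_links.txt and bio_data.txt respectively. To find a specific link, you can build on this program.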

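```python
def find_url(url, filename):
    """Return every line of `filename` that contains `url`."""
    matches = []
    with open(filename, 'r', encoding='utf-8') as f:
        for line in f:
            line = line.strip()
            if url in line:
                matches.append(line)
    return matches


url = "https://example.com/bio"
filename = "bio_data.txt"
data = find_url(url, filename)
print(data)
```

In this code, we open bio_data.txt and loop over it line by line, keeping each line that contains the target URL. `find_url` only collects the matching lines; once a matching line has been found, it can then be replaced with the original URL link.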
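The replacement step is not part of `find_url` itself. One way to sketch it, assuming the goal is to overwrite every line that contains a target string and save the file back, is the hypothetical `replace_url` below; its name, parameters, and the demo file contents are illustrative, not from the original:

```python
import os
import tempfile


def replace_url(filename, target, replacement):
    """Hypothetical helper: replace each line containing `target` with `replacement`.

    Rewrites the file in place and returns how many lines were replaced.
    """
    with open(filename, "r", encoding="utf-8") as f:
        lines = f.read().splitlines()

    count = 0
    for i, line in enumerate(lines):
        if target in line:
            lines[i] = replacement
            count += 1

    with open(filename, "w", encoding="utf-8") as f:
        f.write("\n".join(lines) + ("\n" if lines else ""))
    return count


# Usage on a throwaway links file (contents are made up for the demo).
path = os.path.join(tempfile.mkdtemp(), "bio_data.txt")
with open(path, "w", encoding="utf-8") as f:
    f.write("/bio\nhttp://www.google.com\n")

replaced = replace_url(path, "/bio", "https://www.example.com/bio")
print(replaced)  # 1
```

Reading the whole file into memory keeps the sketch simple; for very large link files, writing to a temporary file and renaming it afterwards would be safer.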
